Vibe Coding with Canopy

March 31, 2026

by Rowland Jowett

In the blink of an eye, nine years have passed, and it’s time for me to move on to new pastures. What I’ll miss most about Canopy is working with such a high-calibre group of customers, people who constantly push simulation technology to meet the unique demands of their racing series.

The Early Days

Although we’ve always aimed to keep the software series-agnostic, bespoke work inevitably became part of what we deliver. In the early days, we said yes to everything (and inevitably let people down). To avoid disappointment, we later shifted to a more pragmatic approach: paid upgrades or feature-request upvotes. It wasn’t perfect, but it helped us prioritise fairly. After all, if we’re building things at no additional cost, you’d be mad not to ask.

Regretfully, there just isn’t enough time to turn the brilliant ideas from hundreds of great engineering minds into software… until now.

THE FUTURE IS HERE

You’ve no doubt felt pressure to weave AI into your workflow. Beyond making questionable memes, its clearest win right now is writing code. Some Canopy API users are already connecting to build bespoke applications, but with vibe coding this is easier and more accessible than ever. I’d like to share my experience.

I am not a coder. My university taught Matlab, a horrid, inefficient, expensive relic that infected racing teams. Python is better, but I’m a novice. Yet with vibe coding, interacting with the Canopy API is genuinely easy.

Let's Get to the Point

Here’s what I did (all free*, which satisfies the Yorkshireman in me):

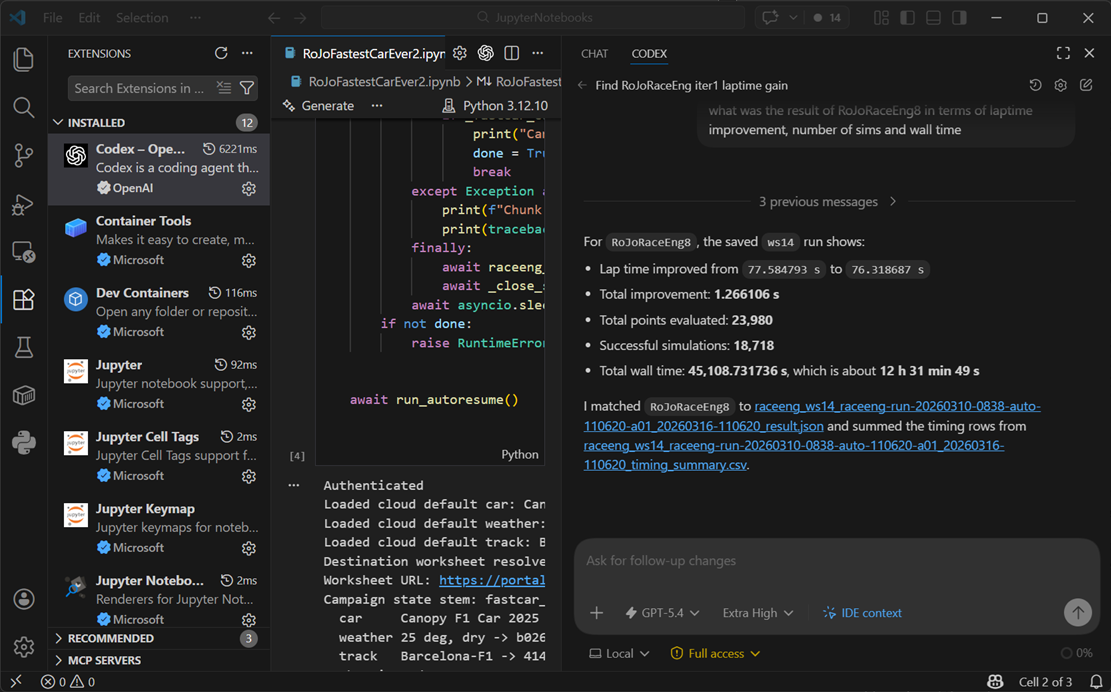

Install VS Code

Install Python

Add the VS Code extensions: Codex, Jupyter, Python

Download the Canopy Python examples into a local folder

Get your client ID, secret, username, and password from Canopy support

Give Codex full access (at your own risk!)

Ask Codex everything. If it errors, get it to fix it. Point it at examples if needed.

Enjoy

*Pay OpenAI £20/month when you run out of tokens.

Process

I completed the eight steps above in 1 hour 30 minutes and was running a Dynamic Lap via the API from the Codex prompt without even looking at the code. Not a bad place to start.

I quickly moved on to force, encourage, and cajole it to run studies inside worksheets to reduce mess, and asked it to run iterative exploration sweeps to optimise mCar to hit a target lap time. That’s not a bad first day for someone who has never touched the API or Python.

Most of my time was spent familiarising myself with VS Code, waiting for Canopy, and realising far too late that some code updates required me to close and re-open the Python Jupyter notebook. That explains why Codex was adamant it had fixed things: I was still executing an old version of the code.
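The stale-code trap can be reproduced, and avoided, without restarting the notebook. The sketch below is not part of the Canopy examples; the module name `lap_helpers` and its function are hypothetical stand-ins for whatever helper file Codex is editing. It shows that an already-imported module keeps running the old code until it is explicitly reloaded.

```python
import importlib
import pathlib
import sys
import tempfile

# Avoid .pyc caching so a rapid rewrite of the file is always recompiled.
sys.dont_write_bytecode = True

# Write a throwaway module to a temp folder so the example is self-contained.
workdir = pathlib.Path(tempfile.mkdtemp())
module_path = workdir / "lap_helpers.py"  # hypothetical helper module
module_path.write_text("def target_lap_time():\n    return 77.0\n")
sys.path.insert(0, str(workdir))

import lap_helpers  # first import, as a notebook cell would do

before = lap_helpers.target_lap_time()

# Simulate Codex editing the file on disk while the kernel keeps running.
module_path.write_text("def target_lap_time():\n    return 76.25\n")

stale = lap_helpers.target_lap_time()  # still the old code in memory

importlib.invalidate_caches()
lap_helpers = importlib.reload(lap_helpers)
fresh = lap_helpers.target_lap_time()  # now the edited code

print(before, stale, fresh)
```

In a Jupyter notebook, the IPython magics `%load_ext autoreload` followed by `%autoreload 2` do roughly the same thing automatically before each cell runs, which may save the close-and-reopen step.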

DAY 2 (Actually Day 1 Nightshift)

The dopamine hit of this productivity is real.

Encouraged by my progress, I had planned to start the next day by automating the Race Engineering Challenge. Instead, I couldn’t put this shiny new toy down and ended up pulling an all-nighter. It doesn’t even feel like work. You just point out flaws and let it iterate.

It succeeded well, but not well enough. So I moved on to refining the algorithm and fixing bugs.

DAY 2 ACTUAL

One advantage of vibe coding is that you’re not emotionally attached to “your” work. Free from the sunk cost fallacy, my new coding buddy and I went into planning mode and constructed a new algorithm, throwing away over 2,000 lines of code to start fresh.

The plan included:

Observations from iteration progress in Canopy worksheets

Speed insights: with a large compute pool, 200 parallel sims take a similar time to one

AI research into how similar optimisation problems are solved

A three-pronged attack on lap time, wall time, and compute credits, distilled into a one-page A4 spec of complex work.

Click send. Go for dinner.

Race Eng Challenge: Complete!

Having spent years praising the parallel coordinates chart for multi-parameter optimisation, I’ll admit that beyond about 6 parameters it becomes pretty useless. More dimensions make it harder to fit the scalar results surface and interpolate between sparse, noisy data.

A constrained optimisation with Dynamic Lap in the loop is clearly a better approach.

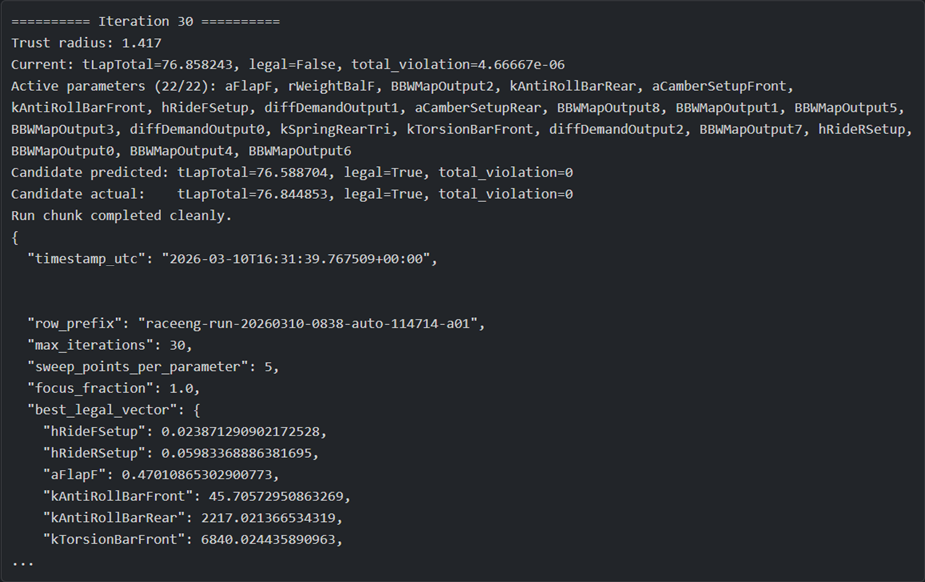

The challenge involved optimising 22 vehicle parameters, including ride heights, aero, springs, ARBs, weight distribution, diff, and brake balance, while respecting heuristic constraints such as balance, bottoming, camber, and stiffness.

For context, a full factorial at just 10 points each would be 10 sextillion sims.
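The arithmetic behind that figure is easy to check. This is just a sanity check of the count, using the 22 parameters and 10 points each quoted above and the short-scale definition of a sextillion (10^21).

```python
n_parameters = 22   # vehicle parameters in the challenge
points_each = 10    # levels per parameter in a full factorial

# A full factorial evaluates every combination of levels.
total_sims = points_each ** n_parameters
sextillion = 10 ** 21  # short-scale sextillion

print(f"{total_sims:,} sims")
print(f"= {total_sims // sextillion} sextillion")
```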

The latest algorithm improved lap time from 77.59 seconds to 76.86 seconds, a gain of 0.73 seconds, all legal with no violations. There’s room for improvement. For example, it crept along towards the weight distribution limit where a human might jump straight to extremes. It was also slightly inefficient due to hard go or no-go constraints creating a knife edge.

That said, 3,330 sims and one day of wall time is a strong result.

The algorithm used single-parameter sweep gradient descent on tLapTotal and constraints, plus projection and superposition to find improvements.
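To give a feel for the idea, here is a minimal sketch of single-parameter sweeps feeding a projected gradient step. It is not the code Codex wrote. `toy_lap_time` is a made-up smooth function standing in for a Dynamic Lap call, the only constraints are simple lower and upper limits, and the step sizes are arbitrary assumptions.

```python
from typing import Callable, List, Sequence, Tuple

Bounds = Sequence[Tuple[float, float]]


def toy_lap_time(setup: Sequence[float]) -> float:
    """Stand-in for a sim: a smooth bowl with a known optimum."""
    optimum = (0.3, -0.2, 0.9)
    return 76.0 + sum((s - o) ** 2 for s, o in zip(setup, optimum))


def project(setup: Sequence[float], bounds: Bounds) -> List[float]:
    """Clamp every parameter back inside its legal range."""
    return [min(max(s, lo), hi) for s, (lo, hi) in zip(setup, bounds)]


def sweep_descent(
    objective: Callable[[Sequence[float]], float],
    start: Sequence[float],
    bounds: Bounds,
    step: float = 0.05,
    rate: float = 0.4,
    iterations: int = 50,
) -> Tuple[List[float], float]:
    setup = project(start, bounds)
    for _ in range(iterations):
        gradient = []
        for i in range(len(setup)):
            # Sweep one parameter up and down, keeping the probes legal.
            up = list(setup)
            up[i] += step
            up = project(up, bounds)
            down = list(setup)
            down[i] -= step
            down = project(down, bounds)
            slope = (objective(up) - objective(down)) / (up[i] - down[i])
            gradient.append(slope)
        # Superpose the single-parameter sensitivities into one move,
        # then project the result back inside the limits.
        moved = [s - rate * g for s, g in zip(setup, gradient)]
        setup = project(moved, bounds)
    return setup, objective(setup)


# The third parameter's unconstrained optimum (0.9) lies beyond its limit (0.5).
bounds = [(-1.0, 1.0), (-1.0, 1.0), (-1.0, 0.5)]
best_setup, best_time = sweep_descent(toy_lap_time, [0.0, 0.0, 0.0], bounds)
print(best_setup, best_time)
```

In this toy case the third parameter ends up pinned at its limit, which is the behaviour you would hope for near something like a weight distribution limit. Real sims are noisy and the constraints are not simple boxes, so treat this as an illustration only.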

Day 3: Complete F1 car design

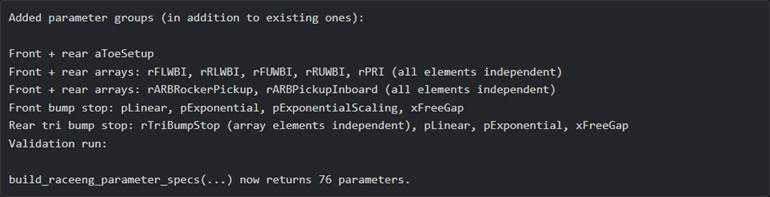

At this point, 22 parameters felt too easy for the might of GPT-5.4, so I escalated to a full F1 car redesign.

76 parameters in total, including toe, bump stops, and inboard pickup points. Essentially everything except tyres and powertrain.

GPT-5.4 correctly identified that coordinate parameters for suspension hard points should be treated as vector translations, and that lookup tables should be shaped rather than tweaked element by element.

Time to bin another 2,200 lines of code and rebuild.

Key changes:

Moved away from single-parameter gradient descent, which was too unreliable

Built a surrogate model to predict direction, more robust to noise

Used multi-dimensional explorations to capture interactions
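As an illustration of why a surrogate is more robust to noise than one-at-a-time sweeps, the sketch below fits the simplest possible surrogate, a linear model, to a batch of noisy toy results and reads the descent direction from the fitted slopes. Everything here is an assumption for illustration: `noisy_lap_time`, its sensitivities and the noise level are invented, and the real algorithm may well use a different model. Capturing interactions would need cross terms, which this sketch leaves out.

```python
import random
from typing import List, Sequence


def noisy_lap_time(setup: Sequence[float], rng: random.Random) -> float:
    """Toy stand-in for a sim: linear sensitivities plus noise."""
    true_slopes = (0.8, -0.5, 0.1)
    clean = 77.0 + sum(k * s for k, s in zip(true_slopes, setup))
    return clean + rng.gauss(0.0, 0.02)


def solve(matrix: List[List[float]], rhs: List[float]) -> List[float]:
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(rhs)
    aug = [row[:] + [b] for row, b in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x


def fit_linear_surrogate(
    samples: Sequence[Sequence[float]], results: Sequence[float]
) -> List[float]:
    """Least-squares fit of result ~ c0 + sum(c_i * x_i) via normal equations."""
    rows = [[1.0] + list(s) for s in samples]
    n = len(rows[0])
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(n)] for i in range(n)]
    atb = [sum(r[i] * y for r, y in zip(rows, results)) for i in range(n)]
    return solve(ata, atb)


rng = random.Random(0)  # fixed seed so the example is repeatable
# One multi-dimensional exploration: all parameters vary at once.
samples = [[rng.uniform(-1.0, 1.0) for _ in range(3)] for _ in range(200)]
results = [noisy_lap_time(s, rng) for s in samples]

coefficients = fit_linear_surrogate(samples, results)
intercept, slopes = coefficients[0], coefficients[1:]
direction = [-s for s in slopes]  # move against the slope to cut lap time
print(slopes, direction)
```

Because every sim contributes to every slope estimate, the noise averages out across the whole batch rather than corrupting each single-parameter difference separately.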

Further refinements:

Robust save and resume if the API disconnects

ETA reporting

Async iteration so we do not wait for stragglers

Less checking of intermediate single sims

Parallel global searches

Repeat runs for noise filtering

Adaptive parameter ranges

Aggressive boundary exploitation

Soft to hard constraint transition

Second-stage refinement algorithm
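Of the refinements above, the soft-to-hard constraint transition is the easiest to sketch. One common way to do it, and only an assumption about how it might be done here, is a penalty that grows as the run progresses and becomes a hard go or no-go at the end. The function name, the weights and the squared penalty are all illustrative choices.

```python
def penalised_time(
    lap_time: float,
    violation: float,
    progress: float,
    start_weight: float = 1.0,
    end_weight: float = 1000.0,
) -> float:
    """Score a sim: soft penalty early in the run, hard rejection at the end.

    violation <= 0 means the setup is legal; progress runs from 0 to 1.
    """
    if violation <= 0.0:
        return lap_time
    if progress >= 1.0:
        return float("inf")  # hard go or no-go once the run is finishing
    # Geometric ramp from start_weight to end_weight as progress goes 0 -> 1.
    weight = start_weight * (end_weight / start_weight) ** progress
    return lap_time + weight * violation ** 2


legal = penalised_time(76.8, violation=-0.05, progress=0.0)
early = penalised_time(76.5, violation=0.1, progress=0.0)
middle = penalised_time(76.5, violation=0.1, progress=0.5)
late = penalised_time(76.5, violation=0.1, progress=1.0)
print(legal, early, middle, late)
```

Early on, the slightly illegal but faster setup scores better than the legal one, so the search is free to explore across the boundary. By the midpoint the legal setup wins, and at the end any violation is rejected outright, which softens the knife edge without allowing an illegal final result.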

Result: 76 parameters optimised in 12.5 hours

Gain: 1.27 seconds from 23,980 sims, using around 6,300 lines of Python

I’m not saying this is how you should design an F1 car, ignoring years of experience of suspension antis, roll centre height, and rising rates, but with 1.27 seconds on the table I would absolutely try it in DiL.

Can AI Make a Supersonic Car? No-Rules NASCAR

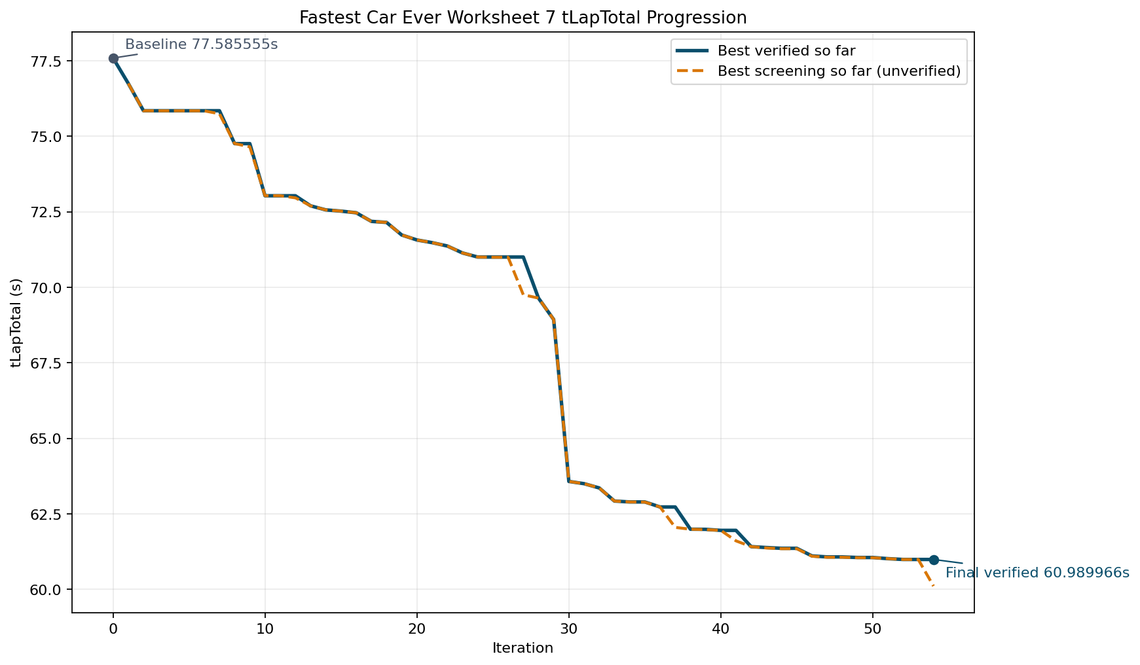

Still not satisfied, I removed all constraints to see if I could break my shiny new toy:

Only lap time at Barcelona matters

No physical limits

After some nerd sniping, Joe Story built a 10 kg, negative-drag, low-inertia monster.

Result: 29.9 seconds, 343 mph average, unconscious driver.

My algorithm had a 6-hour limit.

Result: 61 seconds. It did find some obvious candidates (grip, lift, drag and power) but also changed some non-obvious ones.

A clear win for human intuition and deep knowledge of the model. I could keep refining the algorithm and let it crunch through the night, but at this point I’ve proven the point and I’m just playing.

Why This REALLY Matters

Canopy is well suited to the traditional workflow of running a sim, scrutinising the results using your vehicle dynamics (VD) knowledge, then deciding on what direction to go next.

Customers have pushed us to extract more value from a single simulation. You can now continuously optimise anti-roll bar, ride height, diff, weight jacker, brake balance and more. You can reduce setup dimensions, apply constraints to automatically re-balance the car and avoid wasted sims, and pull additional output channels from SLS. Instead of running sweeps to understand sensitivities, you can often get what you need from a single sim.

From there, the natural progression is to run explorations across a wider parameter space. At small scale, this works well. But as soon as you get to around six car parameters, the problem explodes. You are suddenly dealing with 1000 sims, and things start to break down in practice.

I’ll let you in on a secret. Some people are using Canopy this way, but not many. Why?

Cost

The current pricing model becomes a real constraint. Light includes 5000 credits per month. In a single week, I burned through 114,841 credits iterating on algorithms. That is a top-tier usage pattern, not something most users can justify.

Iteration friction

You run a large exploration, wait for it to complete, then realise you slightly missed the parameter range. Now you need to adjust and start again. That feedback loop is slow and frustrating, and it discourages experimentation.

Analysis bottleneck

Even if you do run 1000 sims, making sense of them is non-trivial. There is simply too much data to interpret efficiently without external tools or a lot of manual effort. The signal is there but extracting it is hard work.

In reality, most workflows still collapse back to something closer to manual iteration. This is where AI changes the equation.

AI is very good at handling large volumes of data, spotting patterns, and iterating towards an objective. Writing optimisation routines is not that far removed from how these models already operate internally. Instead of you manually deciding the next step, the loop becomes continuous, adaptive, and far more tolerant of scale.

The result is not just faster answers. It is a fundamentally different way of working.

Hyper-Personalised Tools

Back at McLaren F1, we had countless niche analysis, race preparation and support tasks. I’m rather envious because back then it was hard to justify the software dev budget, whereas now you could just build tools for them:

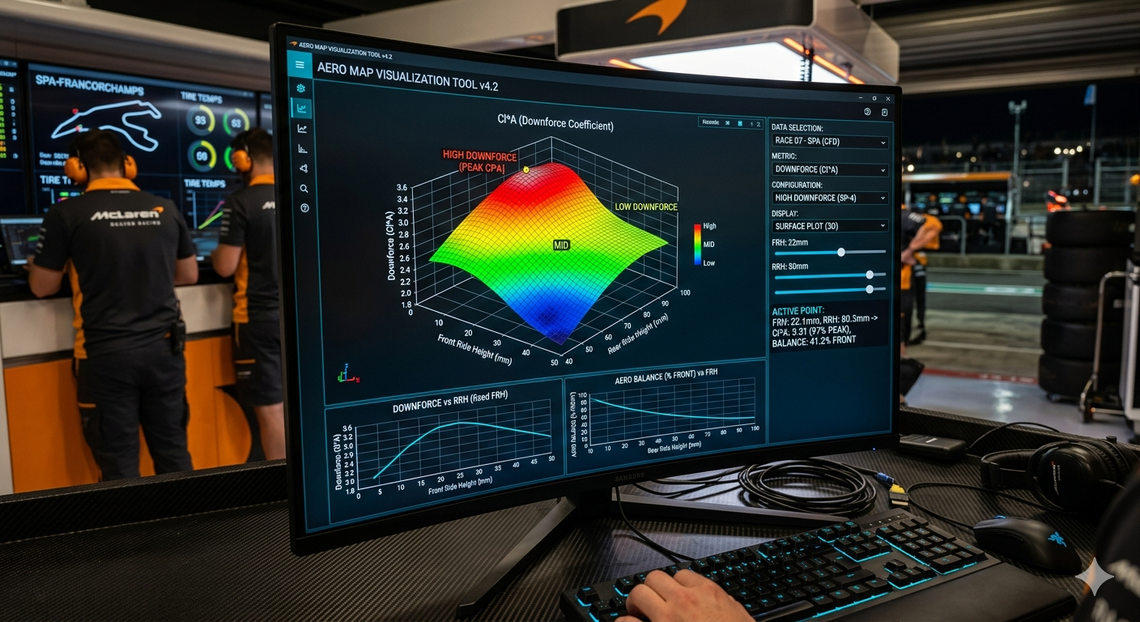

Tyre visualisation and tweaking

Aero map tools

Setup sheet automation

Correlation tools

Track designers

Generic constraints

Ideas can now become working prototypes quickly.

“Talk is cheap. Show me the code. So is AI code.” ~~Linus Torvalds~~ Rowland Jowett

From the Canopy side, we are already well placed for vibe coding and AI-driven add-ons: a robust physics model and optimiser, and rock-solid cloud computing, storage, and API. The UI is no longer a USP.

My Parting Gift

Medium and above users will be upgraded with enough sims to make this real.

Enjoy the future, and thanks for being you.

Rowland